Learn how to create a graph file for visualization in Gephi

This guide is written so that complete beginners can use it. You will be exposed to the command line and the programming language Python. You don’t need to know either of them, as I will explain step by step what to do. I still recommend learning about them, because it will make your life easier.

I published an analysis of all verified Twitter accounts and a guide on how to visualize networks with Gephi. I wrote this article to enable people to collect their own networks instead of relying on others.

What are we going to do?

- Get Twitter API keys

- Download a customized version of the Python script twecoll

- Configure the script

- Run the script to collect the network and create the graph file

While twecoll is capable of several things, in this article I will only explain how to use it to collect the network of accounts someone follows and the network of accounts which follow someone.

Prerequisites: Python and the command line

On OSX and most Linux distributions Python comes pre-installed. On Windows you need to install it yourself. The script uses Python 2.7, which you can get from the Python download page.

To see if you have Python installed and which version, you can use the command line. On OSX and Linux you can use the pre-installed Terminal. On Windows I recommend using Windows PowerShell, which is pre-installed as well.

Because we will need the command line in multiple situations in this guide, you should familiarize yourself with it. Don’t fear it; it works much like a messenger bot. The command line tells you where it is, and you enter commands by typing them and sending them with Enter.

First we type python to see what version is defined as default. You now see the Python version. In my case it’s 2.7.12. The command line looks different as well. There is now >>> in front of the cursor. That’s because we are now inside a Python shell. We could write Python interactively here. But we are going to work with a script and therefore leave the Python shell with the command exit().
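If you would rather ask Python itself than read the start-up banner, the following minimal sketch prints the version and checks it against the 2.7 the script uses. The function names describe_version and is_python_27 are my own, made up for illustration. You can type the lines into the Python shell before you leave it.

```python
import sys


def describe_version(version_info):
    """Turn a version tuple such as (2, 7, 12) into the text '2.7.12'."""
    return "%d.%d.%d" % tuple(version_info[:3])


def is_python_27(version_info):
    """Check whether the major and minor version are 2 and 7."""
    return tuple(version_info[:2]) == (2, 7)


# sys.version_info describes the interpreter that is running right now.
print("Running Python " + describe_version(sys.version_info))
print("Is this the 2.7 the script uses? %s" % is_python_27(sys.version_info))
```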

If you get an error saying that Python is not recognized as a command, you probably need to add it to your path. Because you already have the PowerShell open, simply enter the following command and press Enter. Then close the PowerShell and open it again:

[Environment]::SetEnvironmentVariable("Path", "$env:Path;C:\Python27", "User")

Next we learn to move around in the file system to be able to locate our script later on. To do that we use the command cd (change directory). We always see the path of our location in front of the command we are typing. The command line supports auto-completion: simply press Tab after you have entered the first few characters. You can use either relative or absolute paths after the cd command. To see the content of a directory, we can use the command ls.

Congratulations, now you know some command line basics.

1. Getting Twitter API keys

The Twitter API is the programming interface our script will use to get data from Twitter. To use the Twitter API the client needs to be authenticated, similar to how you log in to Twitter to use it. To do that we need to register an app first. This is free.

- Go to apps.twitter.com

- Create new app (top right)

- Fill out Name, Description, Website (any valid URL is fine) and check the Developer Agreement after you have read it.

- Switch to the ‘Keys and Access Tokens’ Tab.

We will need the Consumer Key and the Consumer Secret later. Either leave the site open or copy the keys somewhere to use them later. You could change the app to read-only to make sure that you don’t unintentionally delete tweets or something like that. (The script is capable of deleting likes.)

Sidenote: Do not share these. People will abuse them. If you still do, like I did above, regenerate them to make the leaked version useless.

2. Download twecoll

For this tutorial I created a modified version of twecoll. I added an export to gdf option. This produces smaller files and Gephi will properly recognize every attribute. I also changed where twecoll saves the API keys. With the modified version they are in the same directory as the script instead of the user folder. Feel free to use the original twecoll. The GML it produces works with Gephi, but you may need to adjust types of values.

Update: I now recommend using the original version as it got some updates.

Download the original twecoll.

Download my modified version. (Download ZIP, top right)

After downloading, unzip it and place the file twecoll in a folder you will be able to navigate to via the command line. I created a folder twecoll in my user directory and put it there: C:\Users\Luca\twecoll.

3. Configure the script

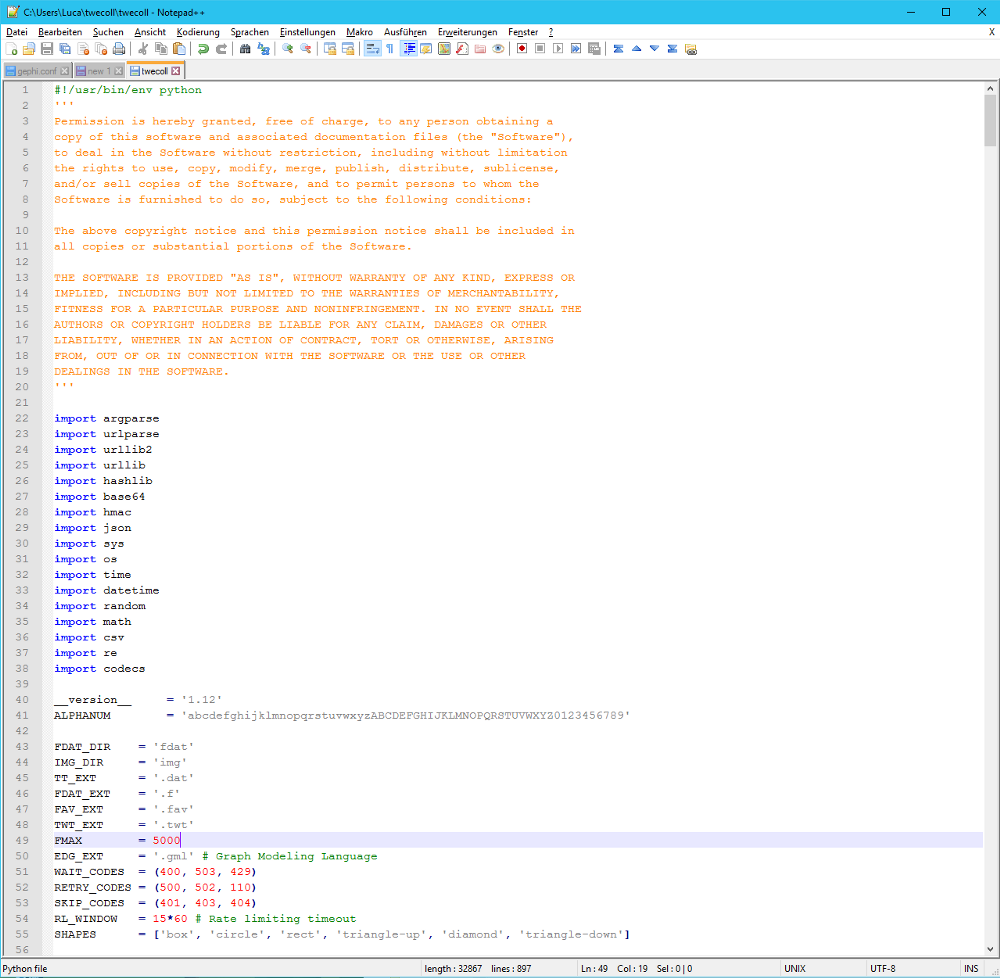

The only option you may want to change is FMAX. It sets the maximum number of followings an account may have for the script to still collect its data. This applies not to the account whose network you collect, but to each of the nodes in it. For smaller accounts I recommend 5000, because the Twitter API can return up to 5000 IDs with one call. For bigger accounts you probably want to use something smaller to keep the network manageable. It’s a theoretical decision as well: what does it mean when someone follows that many people? Is this still a meaningful relation or is it just noise?
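To make the effect of FMAX more concrete, here is a minimal sketch of such a filter. It is not the code from twecoll. The function name accounts_to_fetch and the sample accounts are made up, and I assume that an account with exactly FMAX followings is still included. friends_count is the field the Twitter API uses for the number of followings.

```python
FMAX = 5000  # the value recommended above for smaller accounts


def accounts_to_fetch(accounts, fmax=FMAX):
    """Keep only the accounts that follow at most fmax other accounts.

    Assumption: the limit is inclusive. The real script may compare
    differently.
    """
    return [account for account in accounts
            if account["friends_count"] <= fmax]


# Made-up sample nodes of a network.
sample = [
    {"screen_name": "alice", "friends_count": 120},
    {"screen_name": "bob", "friends_count": 5000},
    {"screen_name": "carol", "friends_count": 48000},
]

for account in accounts_to_fetch(sample):
    print(account["screen_name"])
```

In this sample carol would be left out, because following 48000 accounts is above the limit.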

4. Run twecoll to collect data

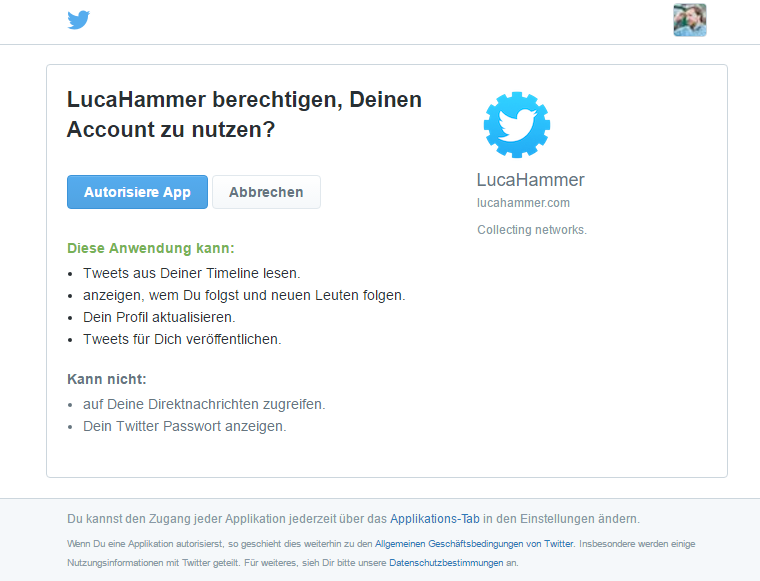

When the script runs for the first time, it will ask you for the consumer key and consumer secret. These are the two strings we got when we created the Twitter app. For future uses this isn’t necessary because twecoll saves the data in a file with the name .twecoll. If you want to start again, simply delete that file. If you use my modified version, it will be in the same directory, else it will be in the user directory.

We will use the script with the init, fetch and edgelist arguments.

First run

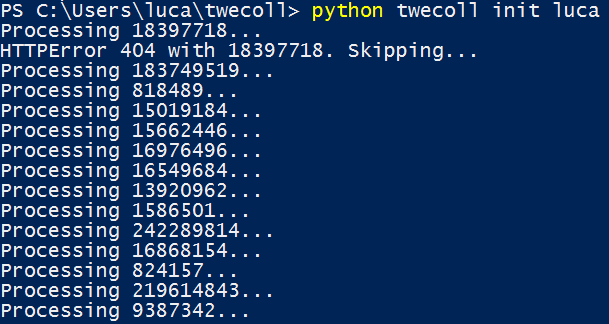

We need to cd to the location where we put the twecoll file. In my case I only need to enter cd twecoll because that’s the folder where I put the script. To run the script we need to tell the computer that Python should be used, then we say which script should be run and finally we add the arguments for the script. For example python twecoll init luca. Because this is the first run we will be prompted to enter the consumer key and secret of our Twitter app. The script will then generate a URL we need to open with a web browser, where we authorize the script to access our account. Twitter then gives us a PIN which we give to the script. It then starts with the data collection.

Don’t use ctrl+c to copy in PowerShell. There, ctrl+c aborts the running process. This is useful to stop the script at any time, but it causes trouble when you want to copy the URL. To copy, you can either use the menu by clicking on the PowerShell symbol at the top left, or press Enter to copy the highlighted text. If nothing is highlighted, Enter will send the command you are writing at the moment. To paste text you can either use the menu or simply click your right mouse button.

You can use python twecoll -h to see all available commands. Use python twecoll COMMAND -h to get help for a certain command. You need to replace COMMAND with for example init. I will give you more information on the commands you will be using to collect Twitter networks.
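The three parts of a call (the interpreter, the script and the arguments) can also be written down as a list, which is how Python itself would start another program. The following is only a sketch. The helper twecoll_command is a made-up name and luca is the example screen name used in this guide.

```python
def twecoll_command(command, screen_name, *options):
    """Build the argument list for one twecoll call.

    The list mirrors what is typed on the command line:
    interpreter, script, command, screen name, optional flags.
    """
    return ["python", "twecoll", command, screen_name] + list(options)


# The three steps of this guide, in the order they are run.
steps = [
    twecoll_command("init", "luca"),
    twecoll_command("fetch", "luca"),
    twecoll_command("edgelist", "luca", "-m"),
]

for step in steps:
    # Joining with spaces gives the text you would type yourself.
    print(" ".join(step))
```

Each list could be handed to subprocess.call from the folder that contains the script, but typing the commands yourself works just as well.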

init command

This initializes the data collection process. You need at least one argument to use it, and that’s the screen name of the person whose network you want to collect: python twecoll init luca

By default twecoll init will collect the list of people the account follows. If you want to look at the followers, you need to add the -o or --followers argument.

It should look like this: python twecoll init luca -o

Or: python twecoll init luca --followers

The init command creates a file called SCREENNAME.dat with information about the accounts followed by or following the account you specified. It will also create a folder img, if it doesn’t exist already, and put the avatars of the accounts it collects the data of there.

Once the init command has finished collecting all accounts, it will write ‘Done.’.

fetch command

The fetch command goes through all the accounts collected by init and collects the IDs of the accounts followed by each of them. Again, we need to specify for which account we want to fetch the followings of their followings/followers.

In my example I would enter: python twecoll fetch luca

If it doesn’t exist yet, fetch creates a folder ‘fdat’. For each account whose following IDs it collects, it creates a file named after the ID of the account, followed by .f. Inside it saves the IDs, one per line, without any additional information, because only those IDs are relevant which also exist in the .dat file.

The way fetch works makes it quite robust. If it stops for whatever reason (no internet, computer turned off, manually stopped) you can restart it with the same command and it will pick up where it stopped.

Sidenote: In the PowerShell/Terminal you can press the arrow keys to move through your command history.

If the .f file for a certain ID already exists, the script will skip that ID. This makes it possible to pause the data collection and helps when you create multiple networks where the same IDs are needed. But over time the files may become outdated. I recommend deleting the fdat directory if you haven’t used the script for some time.
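The skipping behaviour is what makes restarting cheap. Here is a minimal sketch of the idea, not the actual twecoll code. fetch_all is a made-up name, and fetch_followings stands in for the real API call, which I replace with a fake function so the example runs without Twitter.

```python
import os
import shutil
import tempfile


def fetch_all(ids, fetch_followings, fdat_dir="fdat"):
    """Collect following IDs for every account that has no .f file yet.

    Returns the list of account IDs that were fetched in this run.
    """
    if not os.path.isdir(fdat_dir):
        os.makedirs(fdat_dir)
    fetched = []
    for account_id in ids:
        path = os.path.join(fdat_dir, "%s.f" % account_id)
        if os.path.exists(path):
            continue  # collected in an earlier run, so skip it
        following_ids = fetch_followings(account_id)
        with open(path, "w") as handle:
            for following_id in following_ids:
                handle.write("%s\n" % following_id)  # one ID per line
        fetched.append(account_id)
    return fetched


def fake_fetch(account_id):
    """Stand-in for the API call: pretends every account follows 1 and 2."""
    return [1, 2]


demo_dir = tempfile.mkdtemp()
try:
    print(fetch_all([10, 20], fake_fetch, demo_dir))      # first run
    print(fetch_all([10, 20, 30], fake_fetch, demo_dir))  # restart
finally:
    shutil.rmtree(demo_dir)  # clean up the demo folder
```

On the second call only the account that has no file yet is fetched. Deleting the folder, as recommended above, forces everything to be collected again.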

edgelist command

After we got all the necessary data, we need to combine it. We do that by using the edgelist command. Per default it creates a .gml file and tries to create a visualization with the Python package igraph. I don’t use igraph. If it isn’t installed, the script will skip this and print “Visualization skipped. No module named igraph”. This is fine. The .gml is still created.

I like to use the -m or --missing argument to include accounts the script was unable to collect data on: python twecoll edgelist luca -m

If you use my modified version, you can use the -g or --gdf argument to create a .gdf file: python twecoll edgelist luca -g -m

The order of the arguments is irrelevant.

You now have either a SCREENNAME.gml or SCREENNAME.gdf file in the directory of the script, which you can open with Gephi or another tool that supports these formats.
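To give an idea of what combining the data means, here is a minimal sketch that turns .f files into an edge list and writes a bare-bones .gdf file. It is not the code from twecoll. The function names are made up, the node list is passed in directly because I don’t want to guess the layout of the .dat file, and the -m behaviour is not modelled.

```python
import os
import shutil
import tempfile


def build_edges(node_ids, fdat_dir="fdat"):
    """Return (source, target) pairs where both ends are in node_ids."""
    nodes = set(node_ids)
    edges = []
    for source in node_ids:
        path = os.path.join(fdat_dir, source + ".f")
        if not os.path.exists(path):
            continue  # no data was collected for this account
        with open(path) as handle:
            for line in handle:
                target = line.strip()
                if target in nodes:  # ignore IDs outside the network
                    edges.append((source, target))
    return edges


def write_gdf(path, node_ids, edges):
    """Write nodes and directed edges in the GDF format Gephi reads."""
    with open(path, "w") as out:
        out.write("nodedef>name VARCHAR\n")
        for node_id in node_ids:
            out.write("%s\n" % node_id)
        out.write("edgedef>node1 VARCHAR,node2 VARCHAR,directed BOOLEAN\n")
        for source, target in edges:
            out.write("%s,%s,true\n" % (source, target))


demo_dir = tempfile.mkdtemp()
try:
    fdat = os.path.join(demo_dir, "fdat")
    os.makedirs(fdat)
    with open(os.path.join(fdat, "1.f"), "w") as handle:
        handle.write("2\n99\n")  # 99 is not part of the network
    with open(os.path.join(fdat, "2.f"), "w") as handle:
        handle.write("1\n3\n")
    nodes = ["1", "2", "3"]  # account 3 has no .f file
    edges = build_edges(nodes, fdat)
    write_gdf(os.path.join(demo_dir, "demo.gdf"), nodes, edges)
    print(edges)
finally:
    shutil.rmtree(demo_dir)  # clean up the demo folder
```

An edge points from the account that owns the .f file to the account it follows. The file the script writes contains more attributes per node than this sketch does.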

I recommend my guide on how to visualize Twitter networks with Gephi, which starts where you are now.

HTTPError 429: Waiting for 15m to resume

Welcome to the world of API limits. Twitter allows 15 API calls per 15 minutes per user per app. Read more on their API rate limits. The script waits for 15 minutes whenever Twitter returns an error saying that the limit is reached. Depending on the number of followings/followers it can take several hours to days to collect all the data. Because of that I run the script on a Raspberry Pi 2, so I don’t have to leave my computer running all the time.
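You can roughly estimate the waiting time yourself. The sketch below assumes one API call per account, which fits when no account has more than 5000 followings, and it ignores the time the calls themselves take. The function name estimated_wait_minutes is made up for illustration.

```python
import math

CALLS_PER_WINDOW = 15  # calls Twitter allows per window
WINDOW_MINUTES = 15    # how long the script waits after hitting the limit


def estimated_wait_minutes(api_calls):
    """Estimate the minutes spent waiting for the rate limit to reset.

    After every full batch of 15 calls the script has to wait once,
    except after the last batch, when it is already finished.
    """
    if api_calls <= 0:
        return 0
    windows = int(math.ceil(api_calls / float(CALLS_PER_WINDOW)))
    return (windows - 1) * WINDOW_MINUTES


for accounts in (100, 1000, 5000):
    minutes = estimated_wait_minutes(accounts)
    print("%d accounts: about %d minutes of waiting" % (accounts, minutes))
```

For a network of 1000 accounts this comes out at 990 minutes, or about 16.5 hours.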

Update: Thanks to a comment by Jonáš Jančařík, I was able to improve the twecoll code to collect the initial list of accounts with up to 100 fewer API calls. The modified version linked above already has the improvement. I created a pull request for the original version as well, so it should soon be available to everyone.
